by James Clive-Matthews | 1 Apr, 2026 | Systems & Technology

Bad photo of a good slide on what makes content valuable in an AI era, from Kevin Anderson at the inaugural Source Code event last night.

A successor to the much-missed Hacks/Hackers series looking at how tech and journalism can come together to do great things, it was unsurprisingly dominated by conversations about AI.

The point about what is valuable about the content we produce was also core to my old colleague Steven Wilson-Beales’ session on SEO / GEO / AEO / AIO / whatever you want to call it, and what a “zero click” web could look like in practice.

Key points:

– You need differentiation

– You need to add value

– You need to be accessible, relevant, and credible

It’s almost as if E-E-A-T is still a thing!

Also, the lesson we should all have taken from the last decade and a bit of chasing search and social algorithms is simple – diversify.

Don’t get over-reliant on any one traffic source. Don’t chase the algorithm, because the algorithm is changing faster than ever – and with AI search, will increasingly adapt its findings to every individual.

And a top tip – given AI tools have been trained on existing content, you need to take a careful look at your archives. If they don’t answer the potential needs of an AI bot in query fan out mode, they may need an update.

—

But the absolute key point – and this speaks to a lot of the work I’ve been doing behind the scenes lately – is that it’s no longer enough to focus your SEO / GEO efforts on optimisation of individual pages.

You need to see your content as part of a broader system – because the bots are no longer looking for just one page to rank at the top of a list, they’re looking for the right information to answer the query. If they can’t get it from you, they’ll get it from someone else. (Or just make it up…)

by James Clive-Matthews | 11 Feb, 2026 | Systems & Technology

This is a nice, neat summary of the core constraints of current LLM-based AI when it comes to SEO/GEO (based on a much longer, more technical piece, if you want the details).

Back when ChatGPT 3.5 came out, I was telling anyone who’d listen that it was going to disrupt search and publishing.

In early 2024, while at PwC, I started pitching new content formats to address this – intended to help capture whatever the GenAI equivalent of search ranking was going to be. “GEO” before this label stuck (I was calling it AIO at the time).

My thinking then was based on what seemed to be a logical, structured approach – similar to the “query fan out” advocates you’ll see in the “GEO” space today. (Basically label the hell out of your content, anticipate and answer the questions your target audience is likely to ask, as that structure should help the AI understand the context more easily, and so encourage it to pull from your page rather than someone else’s. Effectively a slightly deeper version of an old school Q&A or FAQ piece…)
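As a rough illustration of what that kind of labelling can look like in practice, here is a minimal Python sketch that turns a list of anticipated questions and answers into schema.org `FAQPage` markup. The choice of schema.org JSON-LD is an assumption made for this example (the approach described above doesn't depend on any particular markup), and the function name and sample questions are hypothetical.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Return a schema.org FAQPage structure for (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical questions and answers, for illustration only.
example_pairs = [
    ("What is GEO?",
     "Optimising content so generative AI tools can find and reuse it."),
    ("How does it differ from SEO?",
     "The target is an AI-generated answer, not a ranked list of links."),
]

faq_markup = build_faq_jsonld(example_pairs)
print(json.dumps(faq_markup, indent=2))
```

The printed JSON would typically sit in a `<script type="application/ld+json">` tag on the page. Whether any given GenAI tool actually makes use of it is a separate question.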

But as I dug deeper it soon became clear that the challenge with LLM-based GenAI (from a model visibility perspective) wasn’t to do with clarifying the intended meaning of the information you want the model to ingest and regurgitate, as I first thought. (“These things can process unstructured data, but they’ll process *structured* data easier – so let’s structure it for them.”)

Instead it’s that these systems – despite being called Large *Language* Models – don’t actually understand language, or context. “Logic” to them is a meaningless concept; not only that, they have no concept of what a concept even is.

—

Tokens aren’t words, and don’t have meaning independently – they only appear to have meaning when combined into words.

Tokens create the illusion of being words (and having meaning) because of the probabilistic nature of these tools, when working with them using language as the system interface. This creates an environment in which they’re working within the rules of language, so can produce output that makes sense – even if they don’t “understand” what they’re saying.

But URLs aren’t language, and don’t have linguistic rules or any consistency from site to site in terms of information architecture. Every site’s URL structure is similar, but different.

And as LLMs don’t really understand structure (except as recognisable, predictable patterns), this makes accurately relating URLs a significant challenge for current LLM-based GenAI tools.
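To make the token point a bit more concrete, here is a toy sketch in Python. It is not how any real model tokenises text: the vocabulary, the greedy longest-match rule and the example URL are all assumptions invented for illustration. It only shows the general effect, which is that ordinary language maps onto a handful of familiar pieces, while a URL shatters into many small fragments that carry no meaning on their own.

```python
# Toy vocabulary: an assumption for illustration, not taken from any real model.
TOY_VOCAB = {"the", "cat", "sat", "on", "mat", " ",
             "blog", "post", "http", "s", "com"}


def toy_tokenize(text, vocab=TOY_VOCAB):
    """Greedy longest-match split; unmatched characters become their own tokens."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest vocabulary entry that matches at position i.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No vocabulary match: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


sentence = "the cat sat on the mat"
url = "https://example.com/2024/11/blog-post-x7f"  # hypothetical URL

sentence_tokens = toy_tokenize(sentence)
url_tokens = toy_tokenize(url)

print(len(sentence_tokens), sentence_tokens)
print(len(url_tokens), url_tokens)
```

Real tokenisers use far larger, learned vocabularies, so the exact splits will differ, but the underlying issue is the same: reproducing a URL means getting a long run of arbitrary fragments exactly right, with no linguistic rules to lean on.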

—

This is a structural challenge, baked into the very nature of these models. Despite what many GEO “experts” are now claiming, if your goal is to generate links and traffic from GenAI results, it’s not going to be an easy one to engineer if you’re working from outside that system.

It may be possible to tweak model outputs to improve this and increase URL attribution accuracy, but a) it won’t remove the underlying structural constraints, and b) what would be the incentive for the GenAI companies to do this?

The dust has yet to settle on this one.

by James Clive-Matthews | 30 Nov, 2024 | Systems & Technology

Fascinating, if predictable, findings on ChatGPT source attribution, via TechCrunch – with significant implications for the emerging “Generative Engine Optimisation” successor to SEO that should concern anyone publishing online.

Short version – ChatGPT’s ability to provide accurate citations for the sources of its information remains extremely hit and miss, despite the rise of GenAI search:

“the fundamental issue is OpenAI’s technology is treating journalism ‘as decontextualized content’, with apparently little regard for the circumstances of its original production”

In other words, GenAI focuses on the substance, not the source. It doesn’t matter where a story / insight actually originated – only where the GenAI tool considers it most plausible for it to have originated.

This isn’t just a question of lost traffic due to the lack of a link – there are far more serious implications here.

For example, if you’re a corporate brand producing a big chunky piece of thought leadership based on months of research, this means you could find your work misattributed to a direct competitor if the GenAI algorithms decide a competitor is more likely to have produced something like this. Equally, someone else’s work – or opinion – may be attributed to you.

This is, of course, a potentially huge liability for any brand – especially as hostile actors could use this flaw in the way these tools work to game the system, similar to the old days of Googlebombing, and make it look like your brand has said something it hasn’t.

But it gets worse – there’s nothing* you can do about it:

“Nor does completely blocking crawlers mean publishers can save themselves from reputational damage risks by avoiding any mention of their stories in ChatGPT. The study found the bot still incorrectly attributed articles to the New York Times despite the ongoing lawsuit, for example.”

Welcome to the age of GenAI…

(* well, nothing guaranteed to work all the time, at least…)

by James Clive-Matthews | 15 Aug, 2024 | Systems & Technology

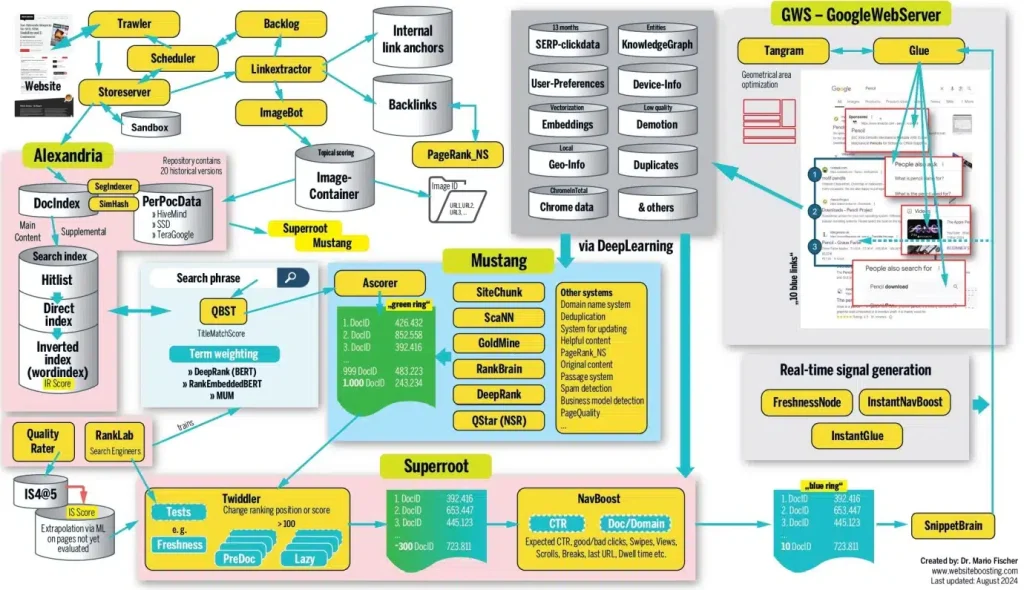

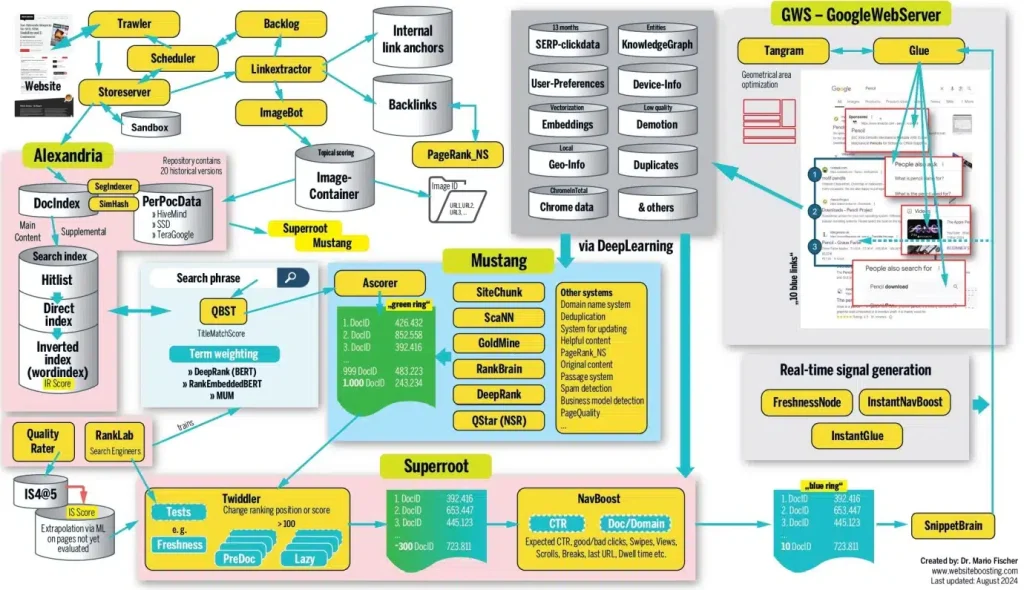

There’s some fascinating stuff in this SEO long read, based on impressive research and analysis. Just bear in mind that, as leaked Google documents put it, “If you think you understand how [search algorithms] work, trust us: you don’t. We’re not sure that we do either.”

To save you time, the main lesson is that “achieving a high ranking isn’t solely about having a great document or implementing the right SEO measures with high-quality content”. Search results shift in near realtime based on thousands of utterly opaque, interconnected assessments of obscure demand and user intent signals, so there’s only so much website managers can do.

For me, this all confirms a few core content principles:

- Context is king, not content. You can have an amazing page full of astounding insight, but if it doesn’t clearly meet the needs of the user at that moment in time, it will go unviewed.

- Page structure is at least as important as substance – if (human and bot) audiences can’t quickly tell that your page is interesting and relevant, they’ll bounce.

- But don’t worry – the key to success is rarely going to be a single webpage. More important is the authority of the domain and brand.

- This means the impact of content is at least as much about cumulative brand building as it is immediate engagement. Think of the long tail, not just the short spike – and focus your content strategy on building this long-term growth over the short-term quick hit.

- Given so much about how this works is unknown, and so many factors are outside your control, it’s best not to over-think it. Follow all the advice SEO experts offer, and you’ll end up with something so over-engineered it’ll lose its coherence and flow. This will increase bounce rates.

So how to succeed?

Go back to basics: Focus on ensuring your content fulfills a clear audience need (ideally currently unmet by other sources), using language audiences are looking for, presented in ways audiences are likely to engage with, and with clear links to and from other relevant content to help both humans and bots understand its relevance within the broader context.

In other words, SEO may be complex when you dig into the details – but it’s really just a combination of common sense, long-term authority building, and a good bit of luck.

It’s still worth reading the whole thing, though.

by James Clive-Matthews | 15 Sep, 2020 | Systems & Technology

This long piece neatly sums up the paradox of the age of algorithmic analytics:

“Algorithms that tell us which topics are trending don’t merely reflect trends; they can also help create them…

“The internet has shown us that the oddest of subcultures and smallest of niches can develop followings… I don’t think readers weren’t interested. It’s that they were told not to be interested. The algorithms had already decided my subjects were not breaking news. Those algorithms then ensured that they would never be.”

This approach of following your analytics is a *terrible* content strategy. By pursuing a mass audience and popularity above all, same as everyone else, you’re doomed to lose your distinctiveness – and relevance to your true target audiences. Even though the algorithms supposedly love relevance above all, they’re still (usually) not sophisticated enough to identify your priority audiences among all those visits.

This is why we’re seeing so many traditional publications fail, and ad revenues collapse: They’ve all become alike, because the algorithms have told them all the same things. That’s made them less valuable, in terms of both price and utility.

Don’t get me wrong: audience analytics are essential. But you need to know how to read them – and their limitations.

by James Clive-Matthews | 7 May, 2020 | Systems & Technology

“I haven’t witnessed an update as widespread as this one since 2003,” says the author of this piece. Some sites are reporting 90% traffic drops, with even the likes of Spotify and LinkedIn apparently impacted. This is big.

What exactly has changed is still unclear – a few days on, results are still fluctuating too much for detailed analysis – but one thing does seem certain: “there are multiple reports of thin content losing positions”.

This has been the trend with Google for a while now, with the firm recommending “focusing on ensuring you’re offering the best content you can. That’s what our algorithms seek to reward.”

What *is* good content in this context? After all, “quality” is quite a subjective concept.

Well, algorithms aren’t people, but Google’s long been aiming to make their code more intelligent, and better able to understand context and likely relevance. Keyword stuffing has been penalised for years, as have dodgy link-building efforts. Instead, Google is aiming for near-human levels of appreciation of nuance.

Helpfully, though, Google has also put out a list of questions to help you understand if the content of your site is likely to be seen as quality in the eyes of the all-powerful algorithm:

- Does the content provide original information, reporting, research or analysis?

- Does the content provide a substantial, complete or comprehensive description of the topic?

- Does the content provide insightful analysis or interesting information that is beyond obvious?

- If the content draws on other sources, does it avoid simply copying or rewriting those sources and instead provide substantial additional value and originality?

- Does the headline and/or page title provide a descriptive, helpful summary of the content?

- Does the headline and/or page title avoid being exaggerating or shocking in nature?

All good questions, and all from Google’s own blog.

by James Clive-Matthews | 29 Feb, 2020 | Marginalia

This is a decent short piece in Inc. about Oprah Winfrey’s podcast strategy – basically mining her archive of TV shows for audio highlights – with some simple yet sensible advice for this age of ephemeral experiences:

“Good content is good content. No matter how old it is… Get creative and find ways to adapt that content to be relevant for… new audiences, and put it in front of them.”

That “get creative” part is key, though. Older content is likely to only have nuggets of still-relevant gold that will need careful mining and potentially refining for different formats, audiences, and purposes.

Remember: Not everything has to be explicitly about today’s perceived front-of-mind issues to be relevant and interesting. There’s a reason Dale Carnegie continues to be a bestselling author in the business books category more than 60 years after his death. Good insights are good insights.

Approached with the right mindset, old white papers, transcripts of conference speeches, case studies, surveys – even LinkedIn posts – could become a treasure trove of inspiration for creating something similar but different to engage new people on new platforms and in new formats.

Content marketing is, after all, about effective presentation of the content as well as the brand. And content ultimately succeeds based on *its* content – ideas and their presentation.

And there is *always* more than one way to present an idea.

by James Clive-Matthews | 24 Aug, 2014 | Marginalia

* assuming you don’t read anything else about clickbait today

This article focuses on content produced by content marketers, but applies just as much to regular publishers who are constantly trying to ride the latest wave of social media fads to suck in a few unsuspecting punters with low-rent, instantly-forgettable clickbait. Short, cheap, trend-driven / fast-turnaround content may well help you hit short-term engagement metrics, but in the long term it will kill audience retention:

“The internet is ballooning with fluff, and bad content marketing is to blame. In our obsession with ‘engaging’ our ‘audience’ in ‘real-time’ with ‘targeted content’ that goes ‘viral,’ we are driving people insane… When a publishing agenda is too ambitious, people can’t afford to shoot anything down… They’re under too much pressure to fill… slots”

I particularly like the concept of “click-flu” – the sense of annoyance and disappointment you get (both with the content and, more importantly, with yourself) when you click on a clickbaity link, and the page you end up on fails to deliver on its hyperbolic promise. The resentment builds and builds – and over time, leads to hatred of the people who lured you in time and again.

If you make a big promise, as so many of these “This is the most important thing you will see today” clickbaity headlines do, you’d damned well better live up to it.

by James Clive-Matthews | 21 Aug, 2014 | Marginalia

People are starting to fully wake up to this now – in the mobile-first age, competitors are no longer just other publishers, it’s *everything*, so we all need to start thinking bigger. Good piece as ever from Mathew Ingram on Gigaom:

“very few news apps take advantage of the qualities of a smartphone — things like GPS geo-targeting, which could use the location of a reader to augment the information they are getting, the way the Breaking News app does. Or the brain inside the phone itself, which could compute how long it took a reader to get through a story, how many times they returned to it, what other news they’ve been consuming, and so on”

A number of sites and apps have started to do *some* of this, but very few have managed to pull it all together. Give it a couple of years, and we may finally have a *properly* disruptive news delivery system that combines the best of everything. Combined with increasingly intelligent algorithms and reams of data on individual user preferences, this could get rid of the need for editors selecting stories altogether. But despite ongoing experiments in code-written stories, to do this really well will still take humans producing the copy and vetting the info. The journalist isn’t obsolete yet.

by James Clive-Matthews | 15 Jun, 2014 | Systems & Technology

“Know your enemy” – the first rule of everything competitive. But we’re mostly doing it wrong – speaking with my MSN hat on, it’s all too easy to fall into the trap of assuming that our main competition is Yahoo, Buzzfeed or the Huffington Post, and base strategy on what they are/aren’t doing to get ahead of the competition.

But if you’re in publishing, no matter what kind, your competition isn’t other publishers – it’s anything and everything that competes for your audience’s time and attention. And this is only getting more obvious for anyone in the online world now that mobile is one of the key entrypoints for news.

What do we use mobile phones for? Communication, obviously. Information, naturally. But mostly? Procrastination. Have a few minutes to kill waiting for a bus, for someone to turn up for a meeting, for the line at the checkout to run down, and what are we all doing? Pissing about on our phones. Some read ebooks, some play games, some do work, some watch videos, some learn a language, some catch up on the news and latest gossip, look for lifestyle tips, browse recipes, check holiday destinations – all the other stuff that broad-catchment websites like the one I work on offer up to attract readers.

Even news itself is as much about wasting time as it is about getting information – because, let’s face it, most news doesn’t directly affect most people. Even the most horrific news stories – terrorist attacks, mass shootings, kidnappings, wars and natural disasters – only directly affect the tiniest fraction of our audiences. They are effectively entertainment to readers – macabre entertainment, perhaps, but entertainment nonetheless. Diversions from their daily lives. Time-wasters.

It’s obvious once you realise it, but it still seems strange to hear the managing editor of the Financial Times name Candy Crush as the paper’s main competitor.

So we as news publishers need to think about how we make *our* product the most attractive time-waster:

– Is it snackable enough?

– Is it engaging enough?

– Will it keep me coming back for another hit like those addictive game apps?

– Do I get any rewards or points or prizes?

– Does it give me things I can share with my friends to show off or entertain them?

– Is it respectable enough that I wouldn’t mind the people behind me on the bus seeing what I’m looking at?

– Is it always fresh?

– Does it have depth to dig deeper if I want to, or does it simply finish and leave me with nothing to do?

– How long will it entertain me for?

These questions are the same for games as they are for media. As everyone carries on catching up with the concept of mobile first, we need to keep reminding ourselves that the questions are the same no matter what kind of mobile product you’re creating.

by James Clive-Matthews | 14 Jun, 2014 | Structures & Models

Fascinating, thought-provoking piece – another of those ones you come away from thinking “damn, that’s so obvious – why didn’t I make the connection before?” A few highlights:

Quality doesn’t mean popularity:

every single newspaper that I talk with. They are saying the same thing, which is that their journalistic work is top of the line and amazing. The problem is ‘only’ with the secondary thing of how it is presented to the reader.

And we have been hearing this for the past five to ten years, and yet the problem still remains. There is a complete and total blind spot in the newspaper industry that, just maybe, part of the problem is also the journalism itself.

Instead, they move the problem out of the editorial room, and into separate and isolated ‘innovation teams’… who are then charged with coming up with ideas for how to reformat their existing journalistic product in a digital way.

But let me ask you this. If The NYT is ‘winning at journalism’, why is its readership falling significantly? If their daily report is smart and engaging, why are they failing to get its journalism to its readers?

If its product is ‘the world’s best journalism’, why does it have a problem growing its audience?

Newspapers (and all-in-one-place sites) are an outdated concept:

No matter how hard they try, supermarkets with a mass-market/low-relevancy appeal will never appear on a list of the most ‘engaging brands’, or on list of brands that people love.

And this is the essence of the trouble newspapers are facing today. It’s not that we now live in a digital world, and that we are behaving in a different way. It’s that your editorial focus is to be the supermarket of news.

The New York Times is publishing 300 new articles every single day, and in their Innovation Report they discuss how to surface even more from their archives. This is the Walmart business model.

The problem with this model is that supermarkets only work when visiting the individual brands is too hard to do. That’s why we go to supermarkets. In the physical world, visiting 40 different stores just to get your groceries would take forever, so we prefer to only go to one place, the supermarket, where we can get everything… even if most of the other products there aren’t what we need.

It’s the same with how print newspapers used to work. We needed this one place to go because it was too hard to get news from multiple sources.

But on the internet, we have solved this problem. You can follow as many sources as you want, and it’s as easy to visit 1000 different sites as it is to just visit one. Everything is just one click away. In fact, that’s how people use social media. It’s all about the links.

One of clearest examples of this is how Washington Post is absolutely failing to engage people on YouTube. Every single day, they are posting a bunch of news videos about random things. Each video is well made (great production quality), but there is no editorial focus.

The result is this:

Here we have a large US newspaper that is barely reaching any people when it uploads a video to YouTube. And it’s not that the videos are uninteresting. There is one about iPhone cases that you can buy at the 9/11 museum (and the controversy of that), with only 687 views. There is a motivational speech (usually a popular thing to post on YouTube), with only 819 views. We have social tactics, like “5 awkward political fundraising moments”, with only 101 views.

Then we have a video by the super-popular George Takei that we all know from Star Trek. This is a person with millions of fans, but his video on Washington Post only attracted 844 views… in two weeks! If this had been posted by any Star Trek focused channel, this very same video would have reached 50,000 views, easy!

What the Washington Post is doing can only be described as a complete and total failure. It cannot get any worse than this.
