by JCM | 27 Nov, 2025 | Narratives & Meanings

If you’re happy with platitudinous banality for your “thought leadership”, GenAI is great!

The trouble is, this isn’t just a GenAI issue.

Many (most?) brands have been spewing out generic nonsense with their content marketing for as long as content marketing has been a thing.

Because what GenAI content is very good at exposing is something that those of us who’ve been working in content marketing for a long time have known since forever: Coming up with genuinely original, compelling insights is *incredibly* hard.

Especially when the raw material most B2B marketers have to work with is the half-remembered received wisdom a distracted senior stakeholder has just tried to recall from their MBA days in response to a question about their business strategy that they’ve probably never even considered before.

And even more especially when these days many of those senior stakeholders are asking their PA to ask ChatGPT to come up with an answer for the question via email rather than speak with anyone.

If you want real insight that’s going to impress real experts, you need to put the work in, and give it some real thought. GenAI can help with this – I have endless conversations with various bots to refine my thinking across dozens of projects. But even that takes time. Often a hell of a lot of time.

Because even in the age of GenAI, it turns out the project management Time / Cost / Quality triangle still applies.

And you still only get to pick two.

(Post sparked by a post about how NotebookLM can now produce entire, quite decent-seeming slide decks, based on a few prompts)

by JCM | 12 Nov, 2025 | Marginalia

GenAI content is neither good nor bad:

– Bad AI content is bad.

– Good AI content is good.

We were having the same arguments 20 years ago about blog content from actual humans.

The problem is not with how the sausage is made but, as Sturgeon’s Law states, that “Ninety percent of everything is crap”.

(Of course, on LinkedIn this quite simple – and surely obvious – statement led to lots of debate about the *ethics* of AI content rather than the quality. That’s a different matter altogether…)

by JCM | 28 Oct, 2025 | Systems & Technology

“45% of the AI responses studied contained at least one significant issue, with 81% having some form of problem”

I’m a big fan of using GenAI to assist in research, ideation, and even sense-checking – asking it to help me with my own critical and lateral thinking. I use these tools multiple times a day, and am constantly encouraging the journalists I work with at Today Digital to use GenAI more to help them boost both their productivity and the impact of their work.

But it’s *vital* to keep fully aware of GenAI’s limitations when using it for anything where facts are important.

No matter how often we remind ourselves that LLMs have no true understanding, no real intelligence, no concept of what a “fact” actually is, the more you use them the easier it is to be taken in by their very, very convincing pastiche of true intelligence.

As this Reuters study shows, despite the apparent progress of the last couple of years, there are still fundamental challenges – which are unlikely to ever be fully overcome using this form of AI. (And which is why LLMs weren’t even classified as AI until very recently…)

The good news? With GenAI’s limitations increasingly becoming more widely appreciated, this could ultimately be a good thing for news orgs – because why go to an unreliable intermediary when you can go direct to the journalistic source?

Journalistic scepticism and fundamental critical thinking skills are becoming more important than ever.

by JCM | 10 Oct, 2025 | Systems & Technology

The rhythms and tone of AI-assisted writing are now pretty much endemic on LinkedIn.

And I get why: GenAI copy is generally pretty tight, pretty focused, and flows pretty well. Certainly better than most non-professional writers can manage on their own.

Hell, it sounds annoyingly like my own natural writing style, honed over years of practice…

But people I’ve known for years are starting to no longer sound like themselves.

Their words are too polished, too slick, too much like those an American social media copywriter would use, no matter where they’re from.

None of this post was written with AI.

And despite (because of?) being a professional writer/editor, it took me over half an hour of questioning myself, rewriting, starting again, looking for the right phrase. Doing this on my phone, my thumbs now ache and the little finger on my right hand, which I always use to support the weight while writing, is begging for a break.

With GenAI I could have “written” this in a fraction of the time, and it would have been tighter, easier to follow.

But it wouldn’t have been me – and I still (naively) want my social media interactions to be authentically human to human.

(Of course, the AI version would probably have ended up getting more engagement, because this post – as well as going out on a Sunday morning when no one’s looking, and without an image – is now far too long for most people, or the LinkedIn algorithm, to give it much attention. Hey ho!)

by JCM | 28 Sep, 2025 | Structures & Models

I’ve seen this piece shared a lot, and like it. I’ve long been a fan of Systems Thinking (check my bio, it’s at the heart of my approach to everything).

But I’ve always seen Systems Thinking as more of a mental model or reminder to look beyond the immediately obvious causes and effects that could impact a strategy, rather than an enjoinder to try and literally map out interactions between all the different components.

As this piece notes, if you try to map out every interaction in a complex, shifting, uncertain system, you’ll never succeed. There are too many variables, all changing. Complexity Theory – even Chaos Theory and the Heisenberg Uncertainty Principle – rapidly becomes more helpful. Only these usually aren’t of much *practical* help at all.

It’s like playing chess – you don’t bother mapping out ALL the possible moves, as that would take forever (look up the Shannon number to get a sense of how many there could be – it’s more than the number of atoms in the observable universe…), and is therefore useless.

With experience, good chess players (and good strategists) can rapidly, intuitively home in on the moves most likely to work – both now and several moves down the line.

The problem is that the same moves will rarely work twice – at least not against the same opponent. And in a complex, ever-changing system, you’ll rarely have the opportunity to make the same sequence of moves more than once anyway, as the pieces will be constantly changing position on the board. Which will also be constantly changing size and shape.

“But metaphor isn’t method.”

That’s the key line from the linked piece. Business strategy isn’t chess – because you’re not restricted to making just one move at a time, or moving specific pieces in specific ways.

The challenge is to keep as flexible as possible while still moving forwards, which is why this bit of advice – one line of many I like, especially when combined with the recommendation to design in a modular, adaptive way – is one I pushed (sadly unsuccessfully) in a previous role:

“Instead of placing one big bet, leaders need a mix of pilots, partnerships, and minority stakes, ready to scale or abandon as conditions change.”

The problem is that strategy decks – still at the heart of most businesses and almost every marketing agency – are intrinsically linear, despite trying to address nonlinear, complex systems.

This is why most strategies end up not really being strategies, but plans, or lists of tactics.

And that’s why most “strategies” fail.

Don’t focus on the *what* – focus on the *how*. Great advice from my former boss Jane O’Connell, which took me a long time to truly understand. It’s a concept that’s core to this excellent piece – and incredibly hard to explain.

Have a read – and a think.

by JCM | 30 Aug, 2025 | Narratives & Meanings

This:

The question of what AI does to publishing has much more to do with why people are reading than how you wrote. Do they care who you are? About your voice or your story? Or are they looking for a database output?

Benedict Evans, on LinkedIn

Context is (usually) more important to the success of content than the content itself. And that context depends on the reader/viewer/listener.

It’s the classic journalistic questioning model, but about the audience, not the story:

- Who are they?

- What are they looking for?

- Why are they looking for it?

- Where are they looking for it?

- When do they need it by?

- How else could they get the same results?

- Which options will best meet their needs?

Every one of these questions impacts that individual’s perceptions of what type of content will be most valuable to them, and therefore their choice of preferred format / platform for that specific moment in time. Sometimes they’ll want a snappy overview, other times a deep dive, yet other times to hear direct from or talk with an expert.

GenAI enables format flexibility, and chatbot interfaces encourage audience interaction through follow-up Q&As that can help make answers increasingly specific and relevant. This means it will have some pretty wide applications – but it still won’t be appropriate to every context / audience need state.

The real question is which audience needs can publishers – and human content creators – meet better than GenAI?

It’s easy to criticise “AI slop” – but the internet has been awash with utterly bland, characterless human-created slop for years. If GenAI forces those of us in the media to try a bit harder, then it’s all for the good.

by JCM | 24 Jul, 2025 | Systems & Technology

The Tragedy of the Commons is coming for the internet:

Google’s AI Is Destroying Search, the Internet, and Your Brain

404 Media, 23 July 2025

The GenAI equivalent of Googlebombing (remember that?) was one of my first concerns when pondering the likely impact of GenAI search, way back when ChatGPT 3.5 came out and the prospect started looking real.

This kind of thing is, sadly, inevitable. And while Google’s got very solid experience of getting around attempts to manipulate its algorithms, it doesn’t have a great track record of releasing AI products that can distinguish facts from confabulations (remember both the Bard and the Gemini launches?).

The other inevitability is that this is also going to lead to more scammy marketing techniques. We’re going to be inundated with yet more of those snake oil salespeople popping up to promise brands results in GenAI, just as they used to in the early days of SEO – fuelled by similar tactics of vast networks of websites all interlinking to each other to create the impression of authority.

Only now, rather than using underpaid humans in content farms, they’ll be using GenAI to spit out infinite copy and infinite webpages, poisoning the GenAI well for everyone in pursuit of short-term profits.

by JCM | 21 Aug, 2024 | Systems & Technology

The default writing style of GenAI is becoming ever more prevalent on LinkedIn, both in posts and comments.

This GenAI standard copy has a rhythm that, because it’s becoming so common, is becoming increasingly noticeable.

Sometimes it’s really very obvious we’ve got bots talking to bots – especially on those AI-generated posts where LinkedIn tries to algorithmically flatter us by pretending we’re one of a select few experts invited to respond to a question.

—

Top tip: If you’re using LinkedIn to build a personal / professional brand, you really need a personality – a style or tone (and preferably ideas) of your own. If you sound the same as everyone else, you fade into the background noise.

So while it may be tempting to hit the “Rewrite with AI” button, or just paste a question into your chatbot of choice, my advice: Don’t.

Or, at least, don’t without giving it some thought.

—

There are lots of good reasons to use AI to help with your writing – it’s an annoyingly good editor when used carefully, and can be a superb help for people working in their second language, or with neurodiverse needs. It can be helpful to spot ways to tighten arguments, and in suggesting additional points. But like any tool, it needs a bit of practice and skill to use well.

But seeing that this platform is about showing off professional skills, don’t use the default – that’s like turning up to a client presentation with a PowerPoint with no formatting.

Put a bit of effort in, and maybe you’ll get read and responded to by people, not just bots. And isn’t that the point of *social* media?

by JCM | 16 Aug, 2024 | Marginalia

This from John Hegarty resonated. Unpopular opinion, but awards – especially in B2B marketing – are the ad industry equivalent of social media vanity metrics. They may get you marginally more reach (usually long after the campaign’s over), but rarely with your real target audiences.

What’s worse, the positive signals award wins send out can create feedback loops of groupthink about tactics that can actively harm your ability to deliver.

I know it’s tough to demonstrate marketing effectiveness, but award wins rarely prove much beyond that marketing people like something. So unless you’re selling to marketers, they don’t really have much value.

This means awards make perfect sense for agencies (and individuals) to enter – but for their clients? The point of marketing is to improve brand perception and make sales with your buyers, not to get a round of applause from other marketers.

Which is why, often, I find the less glamorous side of marketing is where the real business impact can be found.

by JCM | 15 Aug, 2024 | Systems & Technology

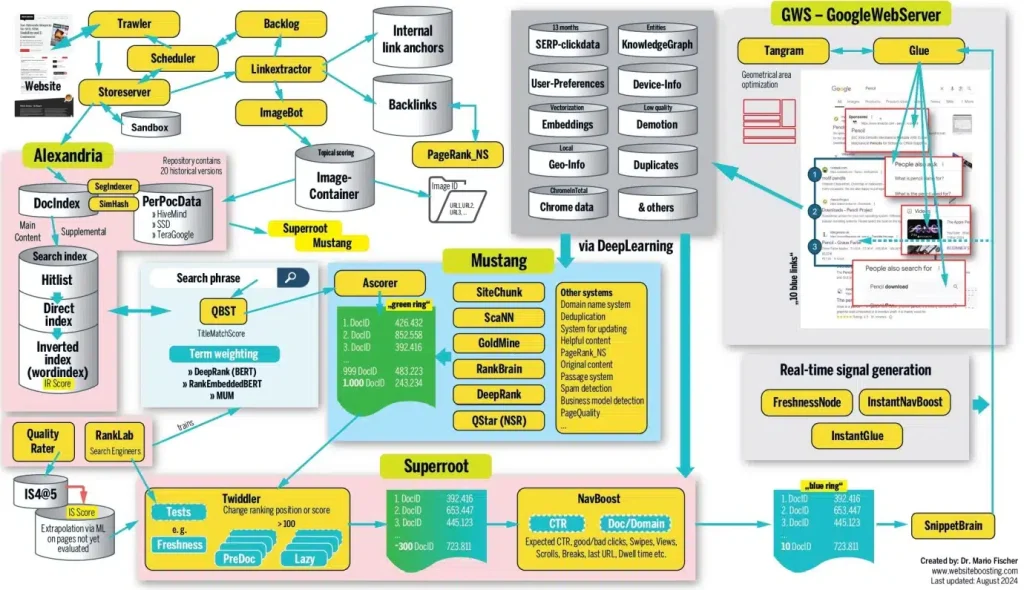

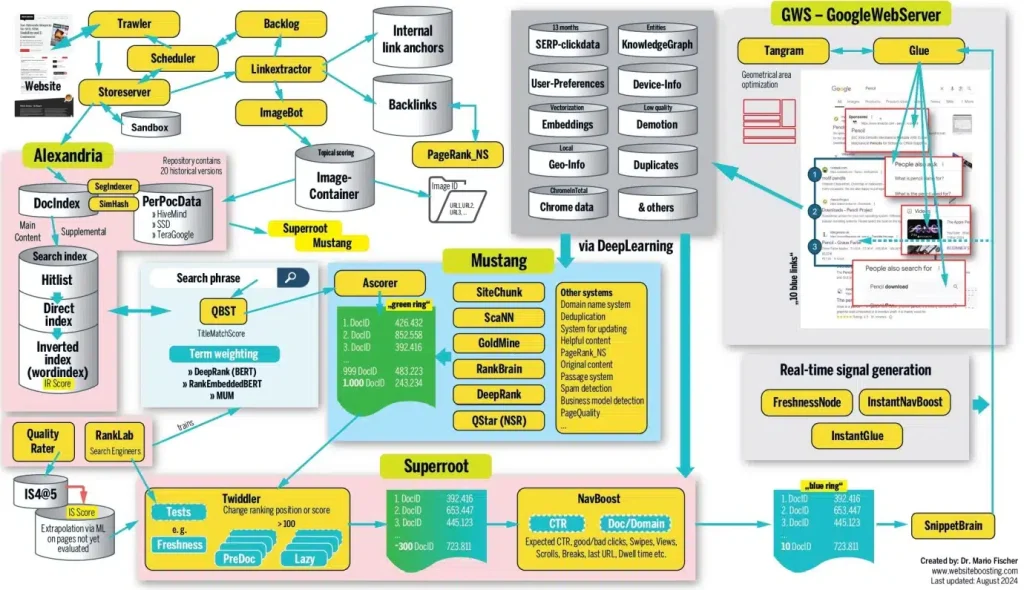

There’s some fascinating stuff in this SEO long read, based on impressive research and analysis. Just bear in mind that, as leaked Google documents put it, “If you think you understand how [search algorithms] work, trust us: you don’t. We’re not sure that we do either.”

To save you time, the main lesson is that “achieving a high ranking isn’t solely about having a great document or implementing the right SEO measures with high-quality content”. Search results shift in near realtime based on thousands of utterly opaque, interconnected assessments of obscure demand and user intent signals, so there’s only so much website managers can do.

For me, this all confirms a few core content principles:

- Context is king, not content. You can have an amazing page full of astounding insight, but if it doesn’t clearly meet the needs of the user at that moment in time, it will go unviewed.

- Page structure is at least as important as substance – if (human and bot) audiences can’t quickly tell that your page is interesting and relevant, they’ll bounce.

- But don’t worry – the key to success is rarely going to be a single webpage. More important is the authority of the domain and brand.

- This means the impact of content is at least as much about cumulative brand building as it is immediate engagement. Think of the long tail, not just the short spike – and focus your content strategy on building this long-term growth over the short-term quick hit.

- Given so much about how this works is unknown, and so many factors are outside your control, it’s best not to over-think it. Follow all the advice SEO experts offer, and you’ll end up with something so over-engineered it’ll lose its coherence and flow. This will increase bounce rates.

So how to succeed?

Go back to basics: Focus on ensuring your content fulfills a clear audience need (ideally currently unmet by other sources), using language audiences are looking for, presented in ways audiences are likely to engage with, and with clear links to and from other relevant content to help both humans and bots understand its relevance within the broader context.

In other words, SEO may be complex when you dig into the details – but it’s really just a combination of common sense, long-term authority building, and a good bit of luck.

It’s still worth reading the whole thing, though.

by JCM | 5 Aug, 2024 | Systems & Technology

Given the music industry’s track record of building successful cases for unauthorised sampling and even inadvertent plagiarism (aka Cryptomnesia, as with the George Harrison ‘My Sweet Lord’ lawsuit back in the 70s) this will be the one to watch.

The music industry’s absolutist approach to copyright is a dangerous path to follow, however. How can you legally define the difference between “taking inspiration from” and “imitating”? What’s the difference between a GenAI tool creating music in the style of an artist, and an artist operating within a genre tradition?

*Everything* is a mashup or a reference, to a greater or lesser extent – that’s how culture works. We’re all standing on the shoulders of giants – as well as myriad lesser influences, most of which are subconscious. Hell, the saying “there’s nothing new under the sun” comes from the Book of Ecclesiastes, written well over 2,000 years ago.

Put legal restrictions on the right of anyone – human or bot – to build or riff on what’s come before, and culture risks hitting a dead end.

So while I have sympathy with artists’ concerns, the claim that GenAI could “sabotage creativity” is a nonsense in the same way claims that the printing press or photocopier could sabotage creativity are. Creativity is about the combination of ideas and influences and continual experimentation to find out what works – GenAI can help us all do this faster than ever. If anything, this should help increase creativity.

What *does* sabotage creativity is short-termist, protectionist restrictions on who’s allowed to do what – exactly like the ones these lawsuits are trying to impose.

by JCM | 1 Aug, 2024 | Systems & Technology

“When AI is mentioned, it tends to lower emotional trust, which in turn decreases purchase intentions.”

An interesting finding, this – especially as it transcends product and service categories – though perhaps to be expected at this stage of the GenAI hype cycle.

This kind of scepticism isn’t easy to overcome – with new technologies, acceptance and mass adoption are often a matter of time – but as the authors of the study point out, the key issue to address is the lack of trust in AI as a technology.

Some of this lack of trust is due to lack of familiarity – natural language GenAI seems intuitive, but actually takes a lot of practice to get decent results.

Some will be due to the opposite – follow the likes of Gary Marcus, and it’s hard not to get sceptical about the sustainability, benefits, and reliability of the current approach to GenAI.

The danger, though, is that this scepticism may be spreading to AI as a whole. The prominence of GenAI in the current AI discourse is leading to different types of artificial intelligence becoming conflated in the popular imagination – even though, just a few years ago, the form of machine learning we now call GenAI wouldn’t even have been classified as artificial intelligence.

Tech terms can rapidly become toxic – think “web3”, “NFT”, and “metaverse”. Could GenAI be starting to experience a similar branding problem? And could this damage perception of other kinds of AI in the process?

by JCM | 1 Aug, 2024 | Systems & Technology

The decline in news audiences reported here – 43%, or 11 million daily views – is shockingly high. This follows Canada’s ill-considered battle with Meta, which led to Meta pulling news from its platforms, including Facebook, in the Canadian market last year, rather than arrange content licensing agreements with news publishers.

This amply demonstrates the vast power these tech platforms have in society and over the media industry, and so justifies the Canadian government’s worries. But it also more than shows – once again – how utterly dependent the online content ecosystem is on these channels for distribution.

Meta/Facebook obviously isn’t a monopoly, but a 43% decline in news consumption thanks to the shutting down of one set of distribution channels? It’s a safe bet that much of the rest of the traffic will be from Google, so it’s more of a duopoly.

What impact is this level of reliance on a couple of gatekeeping tech platforms – who can change their policies on a whim at any time – going to have on public awareness of current events and society at large?

Elsewhere in the article we have an answer: “just 22 per cent of Canadians are aware a ban is in place”.

Shut down access to news, little wonder that awareness of news stories stays low.

Both Canada (with Meta) and Australia (with Google and Meta) have tried forcing the tech giants into doing licensing deals for content that their platforms promote. In both cases, this has – predictably – backfired, and led to the opposite effect to that intended.

But what’s the solution?

This question is becoming more urgent now that GenAI is in the mix, and starting to provide summaries of stories rather than just provide a headline, image, and link.

Meta/Google were effectively acting like a newsstand – showing passing punters a range of headlines to attract their attention and pull in an audience.

GenAI’s summarisation approach, meanwhile, is much closer to what Meta and Google were being (unfairly) accused of doing by the Canadian and Australian governments: Taking traffic away from news sites by providing an overview of the story on their own platforms.

But the GenAI Pandora’s Box has already been opened. Publishers need to move away from wishful thinking – the main cause of the failed Australian/Canadian experiments – and back to harsh reality.

Unlike the Meta news withdrawal – which could be reversed – this new threat to content distribution models isn’t going away.

by JCM | 31 Jul, 2024 | Systems & Technology

“If your website is referenced in a Perplexity search result where the company earns advertising revenue, you’ll be eligible for revenue share.”

How many qualifiers can be fitted into one sentence, all while providing next to no information?

To be clear, I’ve loved WordPress ever since I migrated my old blog to it [checks archives] *18* years ago [damn…]. I also fully get why they’re doing this – some money is better than none, it may work out, and it may actually lead to more traffic / engagement / visibility for WordPress sites.

But this all feels a little like promises of scraps falling from the table of people who are getting scraps falling from an even higher table.

Perplexity currently claims to be making US$20 million from paid subscriptions to its pro service – about the only source of income it currently seems to have, despite its $2.5-3 billion valuation. If they’re now giving away some of that limited income, I can’t see an obvious path to profitability, given the hefty running costs of GenAI.

This doesn’t just go for Perplexity, but for all these GenAI tools:

- What’s the path to a sustainable content publishing-based business model (and all these GenAI companies are content companies) when being able to produce infinite content on demand means the traditional route for making money for these kinds of companies – advertising inventory – is also infinite?

- Value comes from scarcity. Content / ad inventory is no longer scarce. How do you make something that’s not scarce seem valuable enough to get people to pay for it?

- And when all GenAI models offer more or less the same output, and more or less the same level of reliability, and successful features and approaches can be replicated by the competition in next to no time, how do you stand out from the crowd?

Being a content/tech geek I’ve been thinking about this a lot over the last couple of years. Perplexity’s approach is one I like (I did history at university, so I love a good list of sources, even if they’ve mostly just been added to make your work look more credible and most of them are irrelevant, as is often the case with Perplexity) – but I’m far from convinced it has money-making potential. As Wired has put it, Perplexity is a bullshit machine. How valuable is bullshit?

Basically, we’re firmly in the destruction phase of creative destruction. The creative part is yet to come.

But still – at least the providers of the raw material these LLMs are so reliant on are starting to get thrown a few bones. That’s a step in the right direction – because as that recent Nature study made clear, the proliferation of AI-generated content risks surprisingly rapid synthetic data-induced model collapse.

Human-created content may no longer be king, but it remains vitally important. Without it – and a hefty dose of critical thinking – the whole system comes tumbling down.

by JCM | 16 Jul, 2023 | Marginalia

Fascinating long read, combining my old focus on film with my current one on tech, business, and society.

Core to this piece is a fundamental question: What is a fair wage in a digital era in which the connection between the effort and means of production and the business bottom line is utterly obscure?

Lots of interesting questions – not least of which is: Could Hollywood actors striking be a tipping point for AI awareness and regulation?

“SAG-AFTRA is one of the most well-known labor unions in the United States (everybody loves a celebrity). Partnering with WGA to draw a line in the sand over the AI threat to workers is a huge deal that I believe can benefit people in the many different industries beyond Hollywood that are facing the same existential danger that the technology presents. Precedents are important, and big wins on national platforms can help the little guys get what they deserve too.”

by JCM | 21 Nov, 2022 | Narratives & Meanings

“Telling stories should be a tool we use to understand ourselves better rather than a goal in and of itself.”

– from Beware the Storification of the Internet, in The Atlantic

This, for me, has always been the real value of trying to produce “Thought Leadership” in a business context: The process of thinking and constructing a coherent explanation of that thinking can have far more lasting impact on an organisation than the one-off piece of content that appears to be the end result.

Every stakeholder involved in the creation of the thought leadership content should, during its course, have at least a few moments where they really stop and question what they think and believe, why, and how they can better articulate it. This can then positively impact how they operate day to day, how they interact with clients and customers, and how they articulate the benefits of their products and services.

It’s not about the piece of content – it’s about the *thinking*.

*That* is the value of putting an emphasis on “Storytelling” – because the narrative form insists on forcing us into shaping our thoughts in ways others can follow. Ideally in a relatively entertaining, relatively memorable way.

The risk, though, is that we start buying into the myths of our own stories – and forget that they are just one way of looking at the world, created to simplify.

This is why, as we try to produce a piece of content, we need to do a Rashomon on our own thinking.

There’s never only one story, one narrative, one way of looking at the world. Look at things from only one perspective, and you risk ending up like the blind men and the elephant. If you’re serious about producing real thought leadership, you should challenge yourself to look for alternative approaches every time.

This is why Critical Thinking is probably the most important skill when writing and editing: Question your assumptions and preconceptions, consider all the objections and alternative interpretations, and – as long as you can avoid the twin traps of analysis paralysis and editing by committee – the end result *will* be stronger.

Stylistic flair can disguise sloppy thinking – but only so much. And how much better is it to have both style *and* substance?

by JCM | 12 Mar, 2021 | Systems & Technology

As the FT points out, big tech has so much data on us, surely ad targeting should be good by now?

The real solution to increasing your chances of reaching the right people isn’t marketing automation, it’s user experience. One’s a tactic, the other’s a strategy.

After all, if even Facebook struggles to identify audience interests with any degree of accuracy, what hope do more limited platforms have?

The risk isn’t just that you’re wasting your paid media spend on micro-targeting, it’s that you’re wasting your production budget producing multiple variants of marketing content for audiences that may never see your material. It’s lose-lose.

The magic bullet isn’t audience segmentation in promotion plans – it’s focusing on your audiences’ interests in the content and messaging development phase. This helps ensure what you’re saying (and how you’re saying it) can appeal to multiple target groups at the same time – from niche to broad. Then you can let your audiences self-select the next step on their customer journey via clear signposting of where to go to find what they want.

One size may not fit all perfectly, but with a skilled tailor one size can be given the *illusion* of fitting all. People will pay attention to the things they’re interested in, not the things they aren’t. Which makes people far more capable of deciding what’s relevant to them than any algorithm.

by JCM | 25 Nov, 2020 | Structures & Models

What’s your preferred approach for coming up with good ideas? This podcast from The Accidental Creative suggests there are four steps to true creativity:

1) Preparation

2) Incubation

3) Illumination

4) Verification

While everyone seems to focus on that Eureka moment of illumination / inspiration (I tend to get them in the middle of long walks, or while reading a totally unrelated book), and agencies often focus on the first (the mythical perfect brief), the second and fourth of these are actually the most vital.

The best creative ideas need deliberation, interrogation, to be stepped away from and ignored for a while, then returned to with fresh eyes. They need to be poked, questioned, critiqued, bounced off other people, sense-checked, confirmed as not having been done before – all that good due diligence of verification. But creativity can’t be rushed.

At least, that’s the theory.

Sometimes, a ridiculous deadline is *exactly* what we need – even if it’s one of our own making, caused by dawdling on stage two until the last possible moment, or prevaricating with other, less important tasks. I tend to do that more often than I’d care to admit.

But then, we’re all different. The truth is creativity doesn’t follow a set formula. If it did, it wouldn’t be creative. What it needs is the right mindset.

by JCM | 25 Oct, 2020 | Systems & Technology

As I scroll through feeds filled with poor auto-cropping and shoddy machine-generated summaries, it’s hard to disagree with this:

“it is a myth that new innovations don’t need editorial oversight. If you’re going to build automated content curation without a sub-editor, you’re taking a needless risk. Just as editors need better algorithms, algorithms need better editors.”

Will AI eventually get good enough at contextual understanding and sense-checking to truly compete with humans’ ability to parse nuance, sarcasm, irony, and humour, as well as verify facts in a world where disinformation comes in a deluge? Possibly. But it’s still a long way off.

Makes it even more of a shame to see my old employer Microsoft / MSN recently ditch even more of its remaining human editors in favour of algorithms.

by JCM | 16 Sep, 2020 | Narratives & Meanings

Seeing this graphic doing the rounds. Pretty. Still, call me a cynic, but:

1) [citation needed] – the full graphic lists multiple top-level sources, but without details. What were the exact sources? What methodology did each of them use to gather this data? How credible is this information?

2) So what? What useful insight do these lump sums tell us without context? Most of the numbers are random, unrelated big figures, so how does this help us understand the world? What are the trends? What’s the insight?

This is superficially a great bit of marketing, as it’s getting shared a lot and is designed to promote a company flogging a data analytics platform. But there’s no further detail on their site, which is a masterclass in promising a lot (e.g. “Solve back-end integration of any data, at cloud scale, without moving data”) without actually saying or revealing anything about how their tools actually work. To find out more, you need to give them your contact details.

For true data geeks, as for ex-journalists like me, alarm bells start going off at this point:

– Data without context is meaningless

– Single data points don’t equal insight

– Data needs to be well sourced to warrant trust

– Don’t give away your data if you don’t know what you’re getting
